On the latest episode of Inside Tech we map out what the coming months and years might look like as the field of cybersecurity grapples with the emergence of powerful AI https://t.co/1X5SHHYWpU

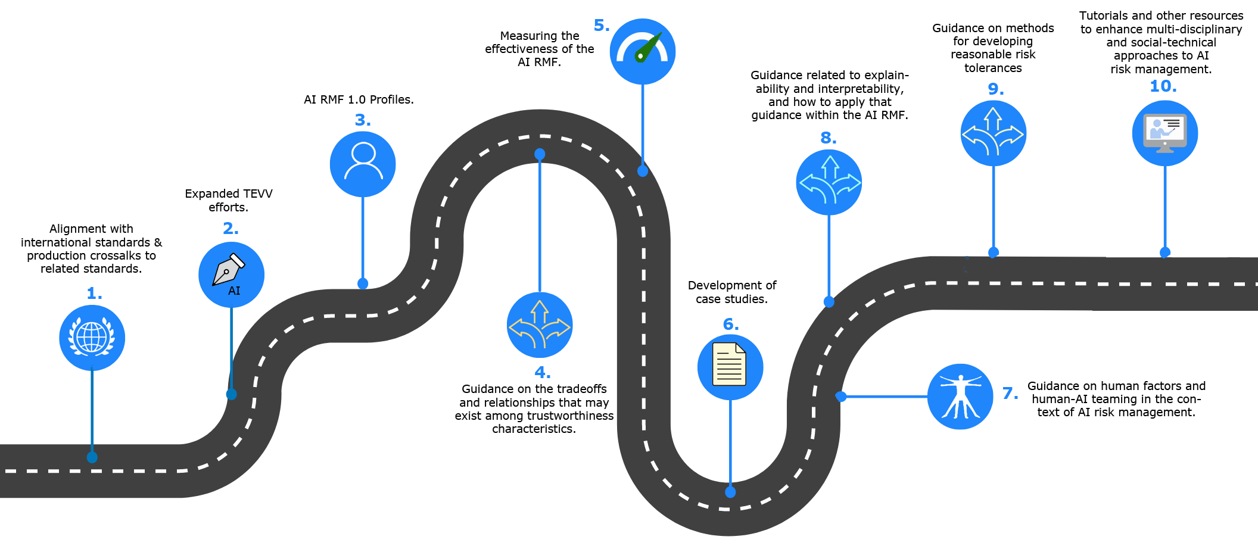

A roadmap-style infographic from NIST’s AI RMF Roadmap that visualizes 10 priority areas (standards alignment, TEVV/testbeds, AI RMF profiles, tradeoffs/guidance, measuring effectiveness, case studies, human factors, explainability, risk tolerances, and tutorials). It directly supports the tweet’s theme by mapping near- to mid‑term priorities and actions cybersecurity teams, regulators, and organizations should take as powerful AI capabilities emerge and reshape threats and defenses.

Source: NIST (National Institute of Standards and Technology)

Research Brief

What our analysis found

The latest episode of the Inspiring Tech Leaders podcast, hosted by PriceRoberts and released on April 19, 2026, dives into a rapidly evolving cybersecurity landscape where artificial intelligence is simultaneously the greatest weapon and the most formidable threat. The episode arrives at a critical moment. As of April 2026, 87% of global organizations have experienced an AI-powered cyberattack in the past year. Yet in a survey of 500 senior leaders, over half rank AI cyber risks among their top three organizational concerns, while a mere 7% currently deploy AI tools to defend against these attacks.

The podcast maps out a future defined by an escalating "AI vs. AI" arms race in cybersecurity. Major technology firms are already staking their positions. Microsoft unveiled Project Ire on August 5, 2025, an autonomous system that reverse-engineers software to detect and block malware with 98% accuracy without human intervention. Meanwhile, Anthropic's Mythos Preview model has identified thousands of zero-day vulnerabilities across major operating systems and browsers, prompting OpenAI to launch GPT-5.4-Cyber, a specialized defensive cybersecurity model, in direct response.

At the heart of the discussion is a fundamental tension: the same AI capabilities that empower defenders — real-time threat detection, automated vulnerability scanning, and intelligent anomaly identification — can be weaponized by attackers for more sophisticated assaults, data breaches, and social engineering campaigns. The episode frames this as the defining challenge for technology leaders in the coming years, asking how the industry can harness AI's defensive potential while mitigating the serious risks of misuse.

Fact Check

Evidence from both sides

Supporting Evidence

AI has created an "AI vs. AI" battlefield

Multiple cybersecurity analyses confirm the landscape has transformed into a dynamic battlefield where both attackers and defenders leverage the same powerful AI technology, validating the podcast's central thesis that the line between defense and offense is blurring.

87% of organizations hit by AI-powered attacks

A global survey found that 87% of organizations experienced an AI-powered cyberattack in the past year, underscoring the urgency of the threat landscape the podcast describes.

AI models are actively identifying vulnerabilities at scale

Anthropic's Mythos Preview has discovered thousands of zero-day vulnerabilities across major operating systems and browsers, while Google's Big Sleep AI focuses on discovering security flaws in code. Both confirm that AI models can autonomously discover weaknesses and chain lower-severity issues into working exploits.

AI reverse-engineering of software is already operational

Microsoft's Project Ire autonomously reverse-engineers software files to classify malware with 98% accuracy, automating what was previously considered the gold standard in malware analysis and validating the podcast's claims about AI-driven reverse engineering.

The balancing act between empowerment and misuse is widely recognized

Industry experts and IT professionals broadly acknowledge that organizations must balance adopting AI-driven cybersecurity tools against the risk of those same tools being weaponized. The misuse risks cited include data breaches, resource exploitation, and advanced social engineering attacks.

Only 7% of organizations defend with AI despite awareness

While over half of 500 surveyed senior leaders rank AI cyber risks among their top three organizational threats, only 7% currently use AI tools defensively, highlighting a critical preparedness gap that supports the podcast's call to action.

Contradicting Evidence

AI may strengthen defense more than offense

Cybersecurity expert Lennart Maschmeyer of Georgia Tech argues that AI strengthens cybersecurity defenses more than it enhances threats, particularly for large-scale, high-stakes cyberattacks, suggesting the podcast's framing of an evenly matched AI arms race may overstate the offensive advantage.

Ambitious AI-driven attacks carry greater risk of failure

Maschmeyer further contends that the more ambitious a cyberattack, the smaller the payoff from using AI and the greater the risk of something going wrong, because generative AI is unpredictable and non-deterministic. This is a nuance the podcast does not fully explore.

AI dramatically improves defensive capabilities in ways that may outpace attackers

While AI accelerates attacks, it also significantly strengthens cyber defense through intelligent detection that correlates diverse signals, identifies subtle anomalies, reduces false alerts, and automates triage and investigation. This suggests the defensive advantage could ultimately prove more substantial than the threat narrative implies.
