The existential risk of artificial intelligence. https://t.co/ZUzBjYHWUs

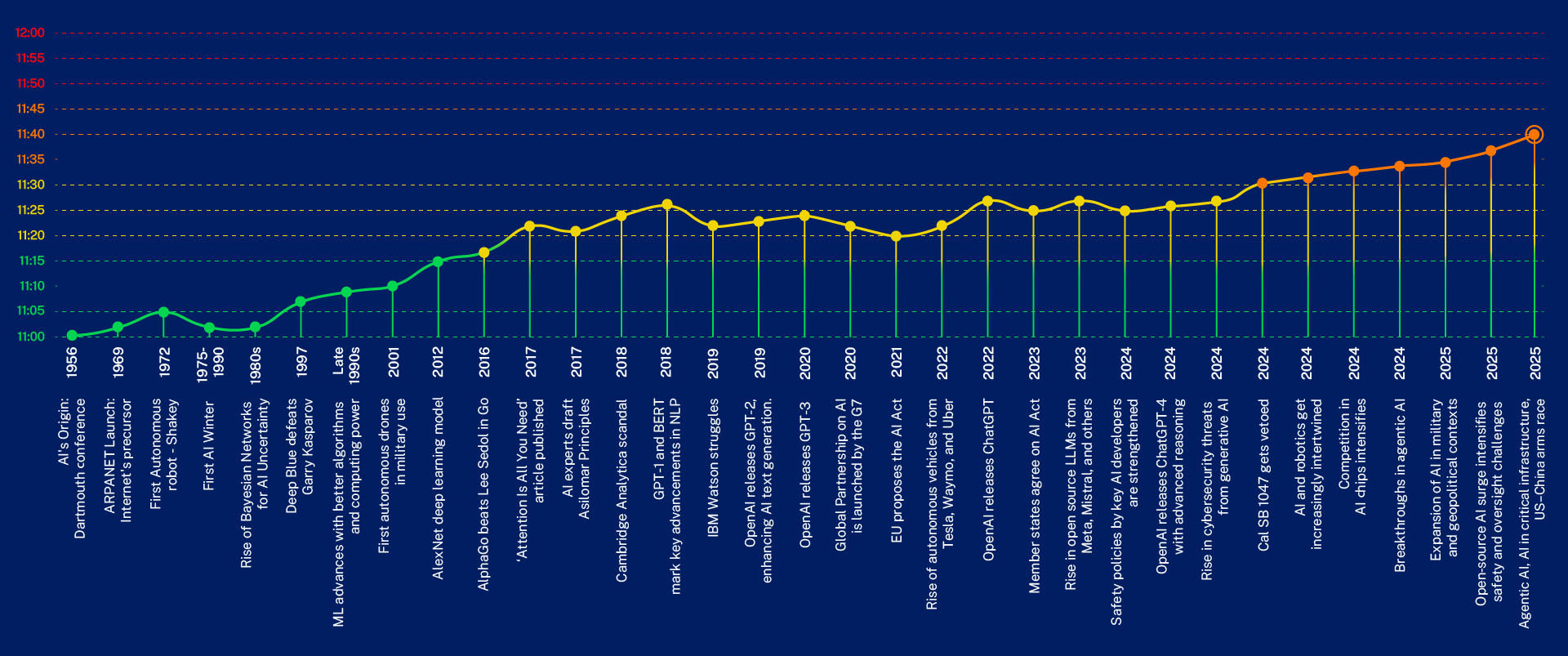

A timeline-style data visualization of IMD’s AI Safety Clock showing ‘minutes to midnight’ across years with annotated AI milestones; it directly illustrates the authors’ measured increase in existential risk from Uncontrolled AGI by linking technological and regulatory events to the clock’s advance.

Source: IMD (IMD Business School, TONOMUS Global Center for Digital & AI Transformation)

Research Brief

What our analysis found

The existential risk posed by artificial intelligence has moved from the margins of academic debate to the center of global policy discussions. A 2022 survey of AI researchers found that the majority believed there is a 10% or greater chance that humanity's inability to control AI will cause an existential catastrophe, while broader surveys of machine learning researchers place the median probability of extinction-level outcomes this century at approximately 5%. Such probability estimates are commonly referred to as "p(doom)." A 2024 report commissioned by the US State Department warned that human extinction was a worst-case outcome of AI development.

The expected timeline for achieving artificial general intelligence (AGI) appears to be shrinking rapidly. A 2023 survey of 2,778 AI researchers indicated most believed AGI would be achieved by 2040, while an emerging industry consensus, as summarized by New Yorker staff writer Joshua Rothman, suggests scaling could produce AGI by 2030 or sooner. Prominent AI researcher Geoffrey Hinton reportedly revised his personal estimate for general-purpose AI from "20 to 50 years" down to "20 years or less" in 2023. Despite these alarming projections, public awareness remains strikingly low: as of December 2025, only 24% of the US public is aware of AI existential risk.

The debate is far from settled. While hundreds of AI experts signed a May 2023 statement from the Center for AI Safety declaring that mitigating AI extinction risk should be a "global priority alongside pandemics and nuclear war," some policymakers and researchers argue the concerns are speculative. Evidence of a slowdown in AI progress — with large language models no longer showing the exponential improvements seen in 2022 and 2023 — has further complicated the picture, leading some governments to redirect limited resources toward addressing more immediate AI harms.

Fact Check

Evidence from both sides

Supporting Evidence

Expert consensus on significant risk

A 2022 survey of AI researchers found the majority believed there is a 10% or greater chance that uncontrollable AI could cause an existential catastrophe, with broader surveys placing median extinction probability at approximately 5% this century.

Open letter calling for a pause

In March 2023, the Future of Life Institute issued an open letter urging AI labs to pause giant AI experiments, warning about the development of nonhuman minds that might "outnumber, outsmart, obsolete and replace us."

Center for AI Safety extinction statement

In May 2023, hundreds of AI experts and notable figures signed a CAIS statement declaring that mitigating AI extinction risk should be a global priority alongside pandemics and nuclear war. Signatories included Geoffrey Hinton, Yoshua Bengio, and CEOs like Sam Altman and Dario Amodei.

US government acknowledgment

A 2024 report commissioned by the US State Department explicitly identified human extinction as a worst-case outcome of AI development, lending institutional weight to the concern.

The unsolved alignment problem

The challenge of ensuring AI systems behave according to human values remains a major unsolved technical problem. Yoshua Bengio has highlighted that a generally intelligent system could form dangerous subgoals, including self-preservation and environmental control, even when given seemingly benign objectives.

Demonstrated rapid capability gains

Systems like AlphaZero have empirically shown how domain-specific AI can progress from subhuman to superhuman ability extremely quickly, lending credibility to concerns about a rapid intelligence explosion.

Accelerating AGI timelines

A 2023 survey of 2,778 AI researchers indicated most expect AGI by 2040, while researchers such as Geoffrey Hinton have shortened their personal estimates, and some industry leaders project AGI by 2030 or sooner.

Contradicting Evidence

Policymaker skepticism and resource prioritization

Some policymakers have increasingly dismissed existential AI concerns as overblown and speculative. At the 2025 AI Action Summit in Paris, officials shifted focus away from existential risks, advocating instead for addressing more urgent and immediate AI harms given limited resources.

Evidence of a slowdown in AI progress

Some evidence suggests improvements in AI technology have recently decelerated, with today's large language models not demonstrating the exponential capability gains seen in 2022 and 2023, undermining assumptions about inevitable rapid progress toward AGI.

Remoteness of true general intelligence

Critics argue that the leap from current narrow AI systems to genuine artificial general intelligence and superintelligence remains poorly understood and potentially far more distant than optimistic timelines suggest, making existential risk projections premature.

Low survey response rates

The often-cited 2022 survey of AI researchers had only a 17% response rate, raising questions about selection bias and whether respondents who chose to participate may have been disproportionately concerned about existential risk.

Immediate harms deserve priority

Many researchers and ethicists argue that focusing on speculative long-term extinction scenarios diverts attention and funding from present-day AI harms — including algorithmic bias, surveillance, labor displacement, and misinformation — that are already affecting millions of people.
