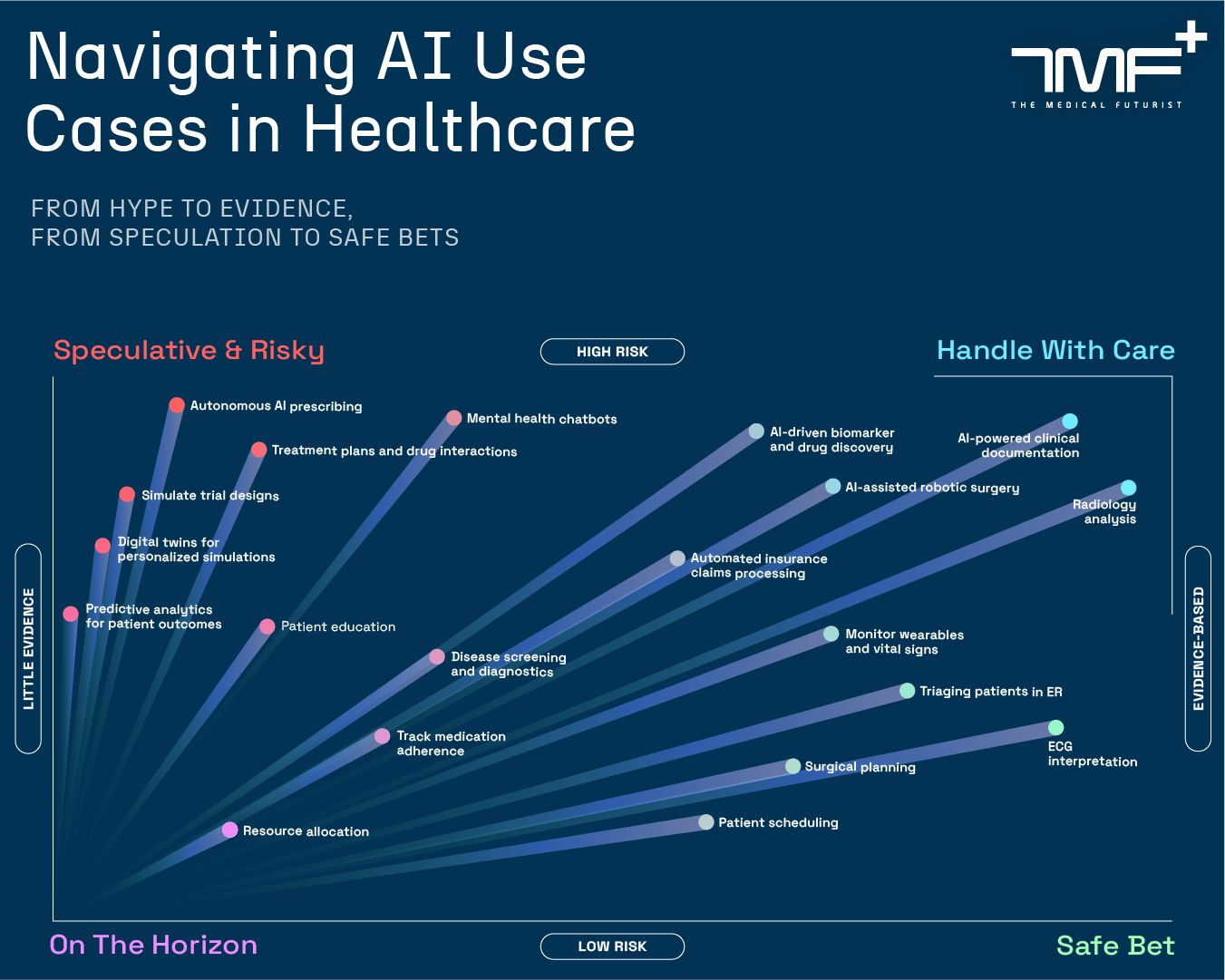

It's striking. Both the under- and over-use of AI in medicine, by patients and doctors, for where there's evidence and where it doesn't exist https://t.co/jo7sFACPRp

An evidence-versus-risk infographic that maps 20 AI use cases in healthcare, from "Speculative & Risky" to "Safe Bet", showing which applications are evidence-based and which remain low-evidence. It directly illustrates the tweet's point that patients and clinicians both under- and over-use AI relative to where evidence exists.

Source: The Medical Futurist

Research Brief

What our analysis found

A growing body of research reveals a striking paradox in how artificial intelligence is being deployed — and avoided — in medicine. On the overuse side, a November 2025 MIT study published in NEJM AI found that when 300 people reviewed medical advice without knowing its source, they consistently rated AI-generated responses as more trustworthy and satisfying than advice written by doctors — even when the AI's guidance was incorrect. Participants indicated a high willingness to follow potentially harmful medical advice and seek unnecessary medical attention based on erroneous AI output. Meanwhile, an April 2026 study by the BMJ Group found that nearly half of responses from five popular AI-driven health chatbots were deemed problematic, with citations frequently incomplete and chatbots observed to fabricate answers rather than admit uncertainty.

At the same time, evidence suggests AI is being underutilized where it could genuinely help. A December 2023 analysis identified professional reluctance, lack of standards, and public disbelief as drivers of AI's underuse in healthcare. It described that underuse as a "major threat", a concern first flagged by the European Parliament in 2020. An April 2026 Nature Medicine editorial stated bluntly that "evidence that AI tools create value for patients, providers or health systems remains scarce," warning of "premature implementation and adoption" in some areas while proven applications languish. According to a November 2025 American Heart Association advisory, only 61% of hospitals using predictive AI tools validated them on local data before deployment, and fewer than half tested for bias.

The consequences of this imbalance are already measurable. A 2024 systematic review of 30 studies found AI utilization was significantly associated with worsening racial disparities — including a machine learning algorithm that led to Black patients experiencing 33% longer wait times and a suicide prediction model that detected only 10% of suicides among Black patients compared to 62% for White patients. A 2025 KPMG survey revealed that 44% of U.S. workers are using AI tools in unauthorized or inappropriate ways, often outside any organizational oversight, underscoring the governance gap fueling this paradox.

Fact Check

Evidence from both sides

Supporting Evidence

People trust inaccurate AI advice over physicians' advice

A November 2025 MIT study published in NEJM AI found that 300 participants consistently rated AI-generated medical advice as more trustworthy and satisfying than physician-written advice, even when the AI guidance was inaccurate and potentially harmful.

Premature adoption acknowledged by leading journal

An April 2026 Nature Medicine editorial stated that evidence AI tools create value for patients, providers, or health systems "remains scarce," and warned that claims of clinical impact are proliferating without agreement on required evidence standards, leading to premature implementation.

Health chatbots produce problematic and fabricated answers

An April 2026 BMJ Group study found that nearly half of the responses five popular AI-driven chatbots gave to health questions were problematic, with frequent citation errors and outright fabricated answers.

AI deployed without validation or bias testing

A November 2025 American Heart Association Science Advisory found only 61% of hospitals using predictive AI validated tools on local data, and fewer than half tested for algorithmic bias before deployment.

AI worsens racial health disparities

A 2024 systematic review of 30 studies found significant associations between AI utilization and exacerbated racial disparities, including Black patients facing 33% longer wait times and a suicide prediction model detecting only 10% of suicides among Black patients versus 62% among White patients.

Underuse of AI formally recognized as a threat

A December 2023 analysis identified professional reluctance, lack of infrastructure, and public skepticism as key drivers of AI underuse in healthcare, echoing a concern the European Parliament raised as a "major threat" in 2020.

Young doctors at risk of deskilling from overreliance

A December 2025 BMJ Evidence Based Medicine editorial warned that generative AI overreliance risks eroding critical thinking through automation bias, cognitive off-loading, and deskilling, while institutional policies remain inadequate.

Contradicting Evidence

AI has documented benefits that complicate a purely negative framing

The same December 2025 BMJ Evidence Based Medicine editorial that warned about overreliance also acknowledged "documented and well-researched benefits" of AI in medicine, suggesting the paradox is not simply a story of failure but of misaligned deployment.

Patients voluntarily share more with AI, suggesting real clinical value

A November 2025 white paper from UK digital health company Aide Health found that 26% of asthma patients admitted to AI systems that they were not taking their medication as prescribed. That disclosure rate is higher than in face-to-face visits, which suggests AI may unlock clinically useful honesty, even though 60% of patients remain uneasy about AI's role.

Underuse data may be outdated given rapid adoption

A 2019 French survey showing 24% of university hospital centers did not consider AI a priority may no longer reflect current attitudes, as the explosion of generative AI since late 2022 has dramatically accelerated adoption and awareness across healthcare institutions.

Unauthorized AI use reflects demand, not just recklessness

The 2025 KPMG finding that 44% of U.S. workers use AI outside organizational oversight partly reflects a failure of institutions to provide sanctioned tools, suggesting the overuse problem is partly a governance and supply problem rather than purely a user judgment failure.
