We invited Claude users to share how they use AI, what they dream it could make possible, and what they fear it might do. Nearly 81,000 people responded in one week—the largest qualitative study of its kind. Read more: https://t.co/tmp2RnZxRm

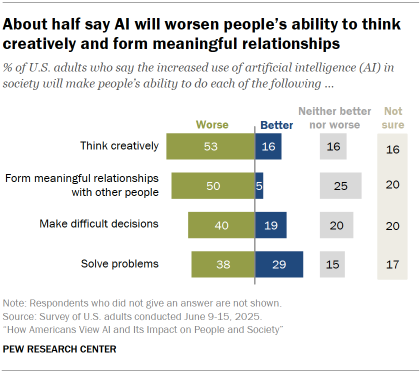

This chart (Sept. 2025) shows how U.S. adults expect AI to affect key abilities—many fear declines in creative thinking and relationships, while some see improvements in problem‑solving. It directly visualizes public hopes and fears around AI, complementing the Claude user interviews on what people dream AI could enable versus what they worry it might harm.

Source: Pew Research Center

Research Brief

What our analysis found

Anthropic claims to have conducted the largest qualitative study of its kind, inviting all Claude.ai account holders to participate in a conversational interview over one week in December 2025. Out of 112,846 submitted interviews, a total of 80,508 met quality thresholds and were analyzed, with responses spanning 159 countries and 70 languages. The results, published on March 18, 2026, paint a sweeping portrait of how people use AI, what they hope it will become, and what keeps them up at night.
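The share of submissions that survived quality filtering follows directly from the two counts quoted above. A minimal sketch of that arithmetic (the variable names are illustrative; only the two counts come from the report):

```python
# Counts as quoted in the report; variable names are illustrative.
submitted = 112_846
analyzed = 80_508

excluded = submitted - analyzed   # interviews that did not meet quality thresholds
retention = analyzed / submitted  # fraction of submissions that were analyzed

print(f"excluded: {excluded:,}")
print(f"retention: {retention:.1%}")
```

Roughly seven in ten submitted interviews were analyzed, which is where the "nearly 81,000" headline figure comes from.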

The most common aspiration was professional excellence (18.8%), followed by personal transformation (13.7%) and life management (13.5%). On the benefits side, 32% named productivity gains as the area where AI has already delivered, though a notable 18.9% said AI has not yet delivered meaningful results. Meanwhile, 81% of respondents said AI has taken at least one step toward their broader vision. Among fears, unreliability topped the list at 26.7%, followed by concerns about jobs and the economy (22.3%) and threats to autonomy and agency (21.9%). Respondents averaged 2.3 distinct concerns each, with only 11% expressing none at all.

The study also revealed a striking tension between perceived benefits and harms. Emotional support and dependence showed the strongest co-occurrence (φ ≈ 0.33), with the people who most valued AI's emotional support roughly three times more likely than average to also worry about becoming dependent on it. Geographically, the sample skewed heavily toward North America (29.5%) and Western Europe (19.0%), while sub-Saharan Africa (2.1%) and Central Asia (0.4%) were far less represented, a geographic imbalance Anthropic itself acknowledged as a limitation.
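The φ value quoted above is a correlation between two yes/no variables, which is a different quantity from a "times more likely" ratio. The sketch below shows how each could be computed from a 2x2 table of respondents. The counts are hypothetical and are not taken from the study, and the function names are illustrative rather than anything Anthropic published.

```python
import math

def phi_coefficient(both, benefit_only, concern_only, neither):
    """Phi: Pearson correlation for two binary variables, from 2x2 counts."""
    row_benefit = both + benefit_only
    row_no_benefit = concern_only + neither
    col_concern = both + concern_only
    col_no_concern = benefit_only + neither
    denom = math.sqrt(row_benefit * row_no_benefit * col_concern * col_no_concern)
    return (both * neither - benefit_only * concern_only) / denom

def lift(both, benefit_only, concern_only, neither):
    """How many times likelier the concern is among people citing the benefit
    than among all respondents."""
    total = both + benefit_only + concern_only + neither
    p_concern_given_benefit = both / (both + benefit_only)
    p_concern = (both + concern_only) / total
    return p_concern_given_benefit / p_concern

# Hypothetical counts for illustration only (NOT data from the study).
hypothetical = dict(both=30, benefit_only=70, concern_only=70, neither=830)

print(round(phi_coefficient(**hypothetical), 2))
print(round(lift(**hypothetical), 2))
```

With these made-up counts the lift is 3 while φ is about 0.22, so the two measures are not interchangeable: the same "three times more likely" ratio can correspond to different φ values depending on how common each theme is.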

Fact Check

Evidence from both sides

Supporting Evidence

Official report confirms the numbers

Anthropic's published feature page and interactive report state that 80,508 interviews were analyzed from a pool of 112,846 submissions, collected across 159 countries and 70 languages during a one-week window in December 2025.

Detailed methodology appendix

A publicly available PDF appendix documents the one-week collection window, filtering criteria, response quality breakdowns (97.6% substantive answers on the hopes question), classifier validation reaching at least 90% agreement with human coders, and de-identification procedures.

Credible comparators support the "largest" claim

Anthropic's own footnote cites the USC Shoah Foundation Visual History Archive (approximately 55,000–59,893 interviews) and the World Bank's "Voices of the Poor" project (approximately 60,000 interviewees) — both smaller than the 80,508 analyzed responses.

Prior pilot demonstrated the tool's viability

Anthropic's December 4, 2025 blog post introducing the Anthropic Interviewer described a pilot of 1,250 professional interviews with publicly released transcripts, establishing that the AI-led interview tool existed and could scale to much larger numbers.

Contradicting Evidence

"Largest of its kind" is a self-assessed claim

Anthropic frames the superlative with the qualifier "we believe" and bases it on its own comparison to well-known projects. No independent body has audited or verified that no larger qualitative interview study exists anywhere in the world.

Significant sampling bias acknowledged

Respondents were existing Claude.ai users who opted in, meaning the sample skews toward people already favorably inclined toward AI. Nearly half of respondents came from just North America (29.5%) and Western Europe (19.0%), while entire regions like sub-Saharan Africa (2.1%) and Central Asia (0.4%) were barely represented, limiting the study's generalizability.

AI-led interviewing and AI-powered coding raise methodological questions

Both the interviews and the analysis were conducted using Claude itself, creating a circular dynamic where Anthropic's own product gathered and interpreted data about Anthropic's own product. While classifiers were hand-validated, the overall framework lacks the independent oversight typical of large-scale academic qualitative research.

Question ordering and dropout effects

Anthropic acknowledged that later questions had higher dropout rates — the "not reached" rate climbed from 0.9% on the first main question to 9.7% on the values question — and that the ordering of benefit and harm questions may have inflated the co-occurrence of positive and negative themes.
