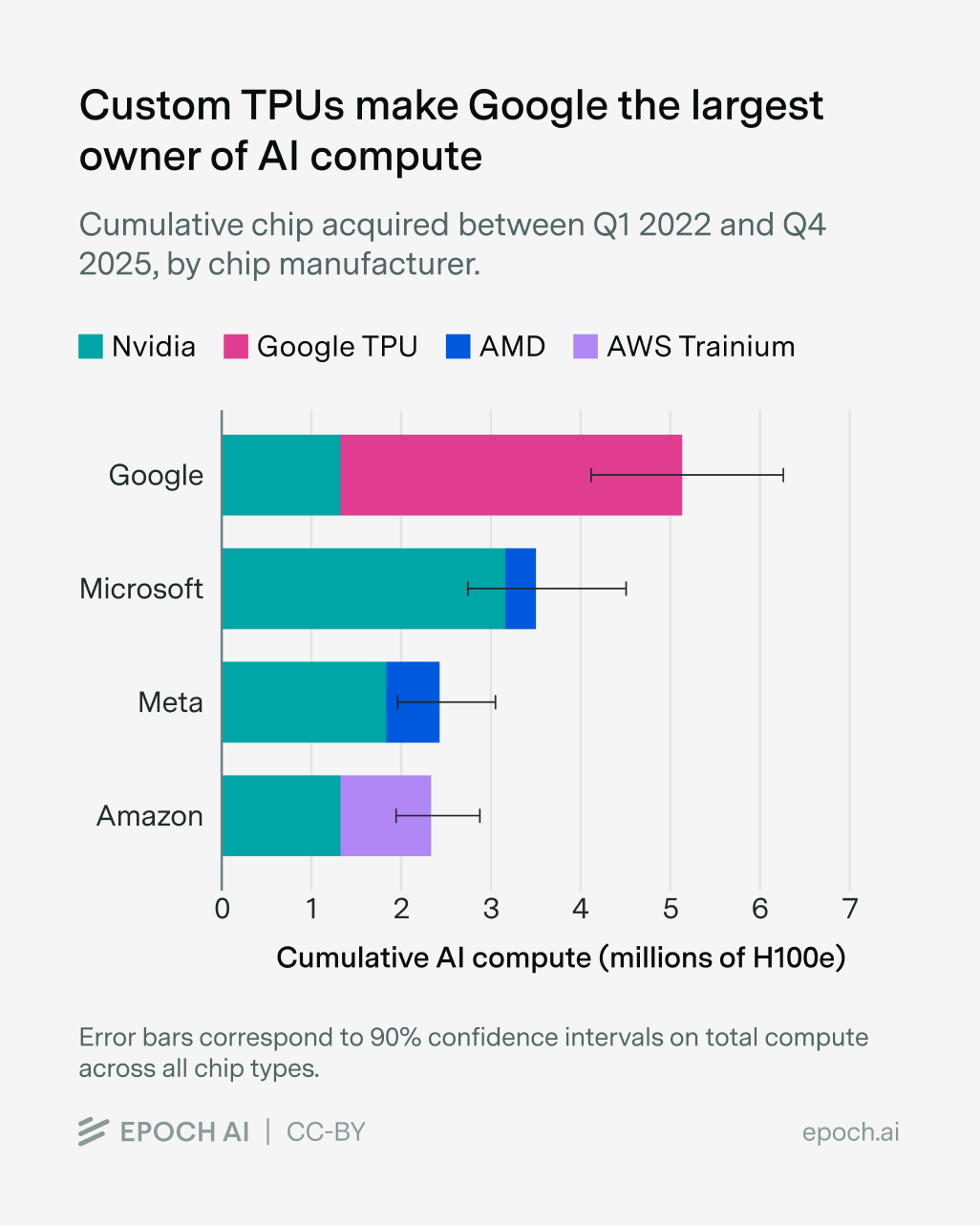

Wow. Apparently Google controls ~25% of global AI compute, with ~3.8 million TPUs and 1.3 million GPUs.

A bar chart from Epoch AI (Apr 7, 2026) showing cumulative AI compute (millions of H100-equivalents) owned by Google, Microsoft, Meta, and Amazon as of Q4 2025, broken down by chip manufacturer. It clearly shows Google leading (≈5M H100e) with the majority of that capacity coming from Google TPUs — directly supporting the tweet’s claim that Google controls roughly 25% of global AI compute driven by millions of TPUs and a smaller share of GPUs.

Source: Epoch AI (Epoch AI Substack / epoch.ai)

Research Brief

What our analysis found

Google has emerged as the single largest owner of AI computing power on the planet, controlling an estimated 25% of global AI compute capacity as of Q4 2025, according to analysis from Epoch AI. The tech giant's arsenal includes approximately 3.8 million custom Tensor Processing Units (TPUs) and 1.3 million GPUs, totaling roughly 5.1 million AI accelerators deployed across its global data center network. What sets Google apart from rival hyperscalers is its heavy reliance on its own custom-designed TPU chips rather than NVIDIA hardware, a strategic bet that has positioned it at the top of the AI infrastructure hierarchy.

The competitive landscape is fierce, but Google holds a clear lead. Microsoft trails with an estimated 3.4 million accelerators (mostly NVIDIA GPUs), followed by Amazon at 2.5 million and Meta at 2.3 million. Collectively, five hyperscalers (Amazon, Google, Meta, Microsoft, and Oracle) now control 71% of the world's cumulative AI compute. The total available computing capacity from AI chips has grown by a factor of approximately 3.3 per year since 2022, which corresponds to doubling roughly every seven months and underscores the pace of the global AI infrastructure buildout.
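The link between a 3.3x annual growth rate and a seven-month doubling time can be checked with a short calculation. The sketch below assumes growth is a constant multiplicative factor per year; the function name `doubling_time_months` is illustrative and does not come from Epoch AI.

```python
import math

def doubling_time_months(annual_growth_factor: float) -> float:
    """Months needed to double under a constant multiplicative growth per year.

    Solves growth ** (t / 12) == 2 for t, assuming steady exponential growth.
    """
    if annual_growth_factor <= 1:
        raise ValueError("growth factor must exceed 1 for a doubling time to exist")
    return 12 * math.log(2) / math.log(annual_growth_factor)

# 3.3x per year is the growth figure quoted in the article.
months = doubling_time_months(3.3)
print(f"Doubling time: {months:.1f} months")  # prints "Doubling time: 7.0 months"
```

Under that assumption, the two figures quoted in the article are consistent with each other.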

Google's parent company Alphabet is doubling down on this advantage, planning to invest between $175 billion and $185 billion in capital expenditures in 2026, roughly double the $91.4 billion spent in 2025. The company recently unveiled its eighth-generation TPU chips, broke ground on 11 new cloud regions and data center campuses in 2024, and is building a diversified chip supply chain with four design partners: Broadcom, MediaTek, Marvell, and Intel. Google Cloud revenue surged 48% year-over-year in Q4 2025, driven largely by enterprise AI demand.
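As a quick sanity check on the capital expenditure comparison, the ratio of the planned 2026 range to the 2025 figure can be computed directly. This is a minimal sketch using only the dollar amounts quoted above; the variable and function names are illustrative.

```python
# Figures in billions of US dollars, as quoted in the article.
CAPEX_2025_BN = 91.4
CAPEX_2026_RANGE_BN = (175.0, 185.0)

def growth_multiple(new: float, old: float) -> float:
    """Return how many times larger `new` is than `old`."""
    return new / old

low, high = (growth_multiple(x, CAPEX_2025_BN) for x in CAPEX_2026_RANGE_BN)
print(f"2026 capex is {low:.2f}x to {high:.2f}x the 2025 figure")
# prints "2026 capex is 1.91x to 2.02x the 2025 figure"
```

The low end of the range is slightly under double and the high end slightly over.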

Fact Check

Evidence from both sides

Supporting Evidence

Epoch AI's direct analysis confirms the figures

The Epoch AI "AI Chip Owners hub," which tracks chip acquisitions from Q1 2022 through Q4 2025, is the primary source for the estimates of 25% global AI compute share, 3.8 million TPUs, and 1.3 million GPUs attributed to Google. Multiple news outlets and industry discussions have cited these same figures.

Standardized methodology supports cross-comparison

Epoch AI converts different chip models — including Google TPUs, NVIDIA and AMD GPUs, and Amazon's Trainium/Inferentia chips — into standardized "H100 equivalents" for comparable measurement. Under this framework, a seventh-generation Google TPU is calculated at 2.3 times the computational power of an H100, which contributes significantly to Google's leading share.
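The conversion to H100 equivalents amounts to a weighted sum of chip counts. The sketch below is an illustration of that idea, not Epoch AI's actual code: only the 2.3 factor for a seventh-generation TPU comes from the text above, while the dictionary keys, the function name, and the example fleet are hypothetical.

```python
# Conversion factors relative to one NVIDIA H100.
# The H100 is 1.0 by definition; 2.3 for a seventh-generation TPU is the
# figure quoted in the article. The key names are illustrative labels.
H100E_FACTORS = {
    "h100": 1.0,
    "tpu_v7": 2.3,
}

def to_h100_equivalents(chip_counts, factors=H100E_FACTORS):
    """Sum chip counts weighted by each model's H100-equivalent factor."""
    total = 0.0
    for model, count in chip_counts.items():
        if model not in factors:
            raise KeyError(f"no conversion factor for {model!r}")
        total += count * factors[model]
    return total

# Hypothetical fleet, chosen only to illustrate the arithmetic.
example_fleet = {"h100": 100_000, "tpu_v7": 100_000}
print(f"{to_h100_equivalents(example_fleet):,.0f} H100e")
```

Because each chip model is multiplied by its own factor, a change to any factor shifts every owner's total and therefore its percentage share.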

Corporate disclosures and analyst reports underpin the data

Epoch AI's methodology relies on compiling corporate earnings reports, public disclosures, and industry analyst estimates, providing a transparent if imperfect basis for the 25% figure.

Massive capital expenditure corroborates scale

Alphabet's planned 2026 capital expenditure of $175 billion to $185 billion, alongside $91.4 billion spent in 2025, is consistent with the infrastructure scale implied by owning over 5 million AI accelerators and expanding aggressively.

Contradicting Evidence

NVIDIA dominates chip sales, complicating the ownership narrative

While Google leads in cumulative AI compute ownership, NVIDIA accounted for nearly two-thirds of all AI compute capacity sold (measured in H100 equivalents) in Q4 2025. Google ranked a distant second as a chip supplier, so its ownership lead partly reflects internal consumption of its own chips rather than dominance of the chip market.

Data coverage limitations introduce uncertainty

Epoch AI acknowledges that its data coverage varies by manufacturer — NVIDIA data begins in 2022 while Google data starts in 2023 — and the estimates rely on available disclosures rather than complete, independently verified inventories from all entities. The conversion to H100 equivalents is a useful but inherently imperfect standardization method that can shift percentage outcomes.

NVIDIA's CEO has questioned Google's TPU performance claims

Jensen Huang has publicly expressed skepticism about Google's TPU efficiency and performance metrics, pointing out that Google does not submit its custom AI chips to independent benchmarking tests. He also suggested that a significant portion of TPU demand is concentrated in a single customer, Anthropic, rather than reflecting broad market validation.

Concentrated customer base raises questions about organic demand

Anthropic has committed to acquiring up to one million of Google's Ironwood TPUs, and Meta has a rental agreement, suggesting that a relatively small number of high-profile clients may account for a disproportionate share of Google's deployed TPU fleet rather than widespread enterprise adoption.
