Kai Trump on AI: "In high school it’s bad, like everyone uses ChatGPT for essays and everything and the teachers get mad. I think honestly schools should start teaching people how to use ChatGPT to their advantage." https://t.co/6E8wviE0gi


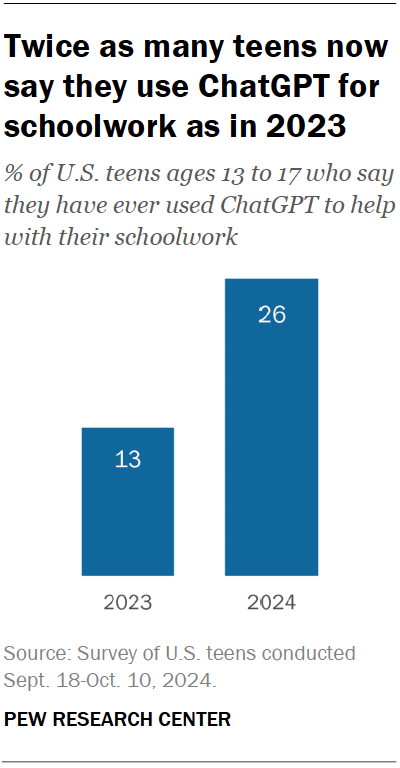

Bar chart showing the share of U.S. teens (ages 13–17) who report having ever used ChatGPT for schoolwork: 13% in 2023 vs. 26% in 2024. The doubling in one year illustrates how quickly student use is growing, which is the context for the tweet's claim that ChatGPT use is common among high schoolers and a source of teacher concern. ([pewresearch.org](https://www.pewresearch.org/wp-content/uploads/sites/20/2025/01/SR_25.01.15_teens-chatgpt_1.png?w=400))

Source: Pew Research Center

Research Brief

What our analysis found

Kai Trump, granddaughter of President Donald Trump, sparked a viral conversation about AI in education during her January 2026 appearance on Logan Paul's IMPAULSIVE podcast. Her observation that "everyone uses ChatGPT for essays" is well supported by data: according to the College Board's May 2025 survey, 84% of high school students now use generative AI for schoolwork, up from 79% just months earlier in January 2025. ChatGPT remains the dominant tool, with 69% of high school students reporting that they use it specifically for school assignments and homework.

Her call for schools to teach students how to use AI productively rather than simply condemning it aligns with a broader shift already underway among educators and administrators. More than 85% of school administrators now view AI literacy as very or somewhat valuable for high school education, and teacher adoption of AI tools jumped from 46% in the 2023-24 school year to 60% in 2024-25. Nearly 6 in 10 parents of high school students agree it is better for their children to use generative AI for schoolwork than to avoid it entirely. Yet a significant policy vacuum persists: over 79% of U.S. educators report their districts still lack clear guidelines on AI use in classrooms.

The debate is far from settled, however. While half of high school students say they use AI for brainstorming, editing, and research, only 18% of teens consider it acceptable to use ChatGPT to write full essays, suggesting that students themselves draw ethical lines around the technology. For the 2025-26 school year, the AI Education Project and Quill.org are offering a free, year-long AI literacy curriculum for grades 8-12, a sign that the education system is beginning to catch up to the reality Kai Trump described.

Fact Check

Evidence from both sides

Supporting Evidence

Widespread student adoption makes prohibition impractical

With 84% of high school students already using generative AI for schoolwork according to the College Board's May 2025 data, banning the technology outright appears increasingly untenable. Teaching responsible use may be more realistic than enforcing abstinence.

Preparation for an AI-driven workforce

Multiple education policy sources emphasize that AI literacy is essential to preparing students for a rapidly changing job market. Integrating AI instruction builds skills that are increasingly expected across a wide range of professional fields.

Educators themselves are embracing AI

Teacher adoption of AI tools grew from 46% to 60% in a single school year, and 18% more teachers found AI helpful in 2025 than in 2024, indicating that the professional consensus is shifting toward integration rather than rejection.

Administrator support for AI literacy

More than 85% of school administrators view students learning to use AI tools as very or somewhat valuable, signaling institutional readiness for the kind of curriculum shift Kai Trump advocates.

AI can enhance learning outcomes

Research indicates that AI tools can support personalized learning, provide immediate feedback, adapt materials for diverse learners, and automate administrative tasks for educators, freeing teachers to focus on instruction and student relationships.

Critical thinking development

Teaching students to use AI responsibly includes training them to critically evaluate AI-generated content, understand its limitations, and identify bias or inaccuracies. These skills strengthen, rather than undermine, intellectual rigor.

Equity considerations favor integration

Embedding AI literacy in daily classroom instruction helps ensure that all students develop these capabilities regardless of home technology access, which can help close demographic and socioeconomic gaps in digital skills.

New curricula are already being deployed

The AI Education Project and Quill.org are rolling out a free, year-long AI literacy curriculum for grades 8-12 in the 2025-26 school year, demonstrating that practical frameworks for classroom integration now exist.

Contradicting Evidence

Academic integrity concerns remain serious

A major objection to normalizing AI in schools is that tools like ChatGPT can facilitate cheating, with some AI-generated work sophisticated enough to bypass plagiarism detection systems, potentially undermining the value of assessments.

Risk of eroding foundational skills

Critics warn that over-reliance on AI for writing, research, and problem-solving may diminish students' ability to think critically, develop original ideas, and build core academic competencies on their own.

Students themselves see ethical limits

Only 18% of teens consider it acceptable to use ChatGPT for writing essays, suggesting that even among young people there is a recognized boundary between helpful assistance and inappropriate dependence.

A massive policy vacuum persists

Over 79% of U.S. educators report that their districts lack clear AI policies, and more than 50% of teachers say their schools have no formal guidance at all. Integrating AI without such guardrails could lead to inconsistent and potentially harmful implementation.

Bias and misinformation risks

AI-generated content can be inaccurate, incomplete, or reflect embedded biases in training data, posing real dangers for students who may lack the experience to critically evaluate what the tools produce.

Privacy and data security threats

AI platforms often collect personal data from users, raising significant concerns about student privacy, data security, and compliance with protections like FERPA and COPPA.

Teacher readiness is a bottleneck

Effective AI integration requires substantial professional development, time, and institutional support that many educators currently do not have. Without adequate training, pushing AI into classrooms risks overwhelming teachers and producing poor outcomes.

Potential harm to teacher-student relationships

Some educators worry that increased reliance on AI tools could weaken meaningful human interactions in the classroom, reducing the mentorship and personal connection that are central to effective teaching.
