JUST IN: Trump is reportedly considering an executive order requiring vetting of new AI models before they are released.

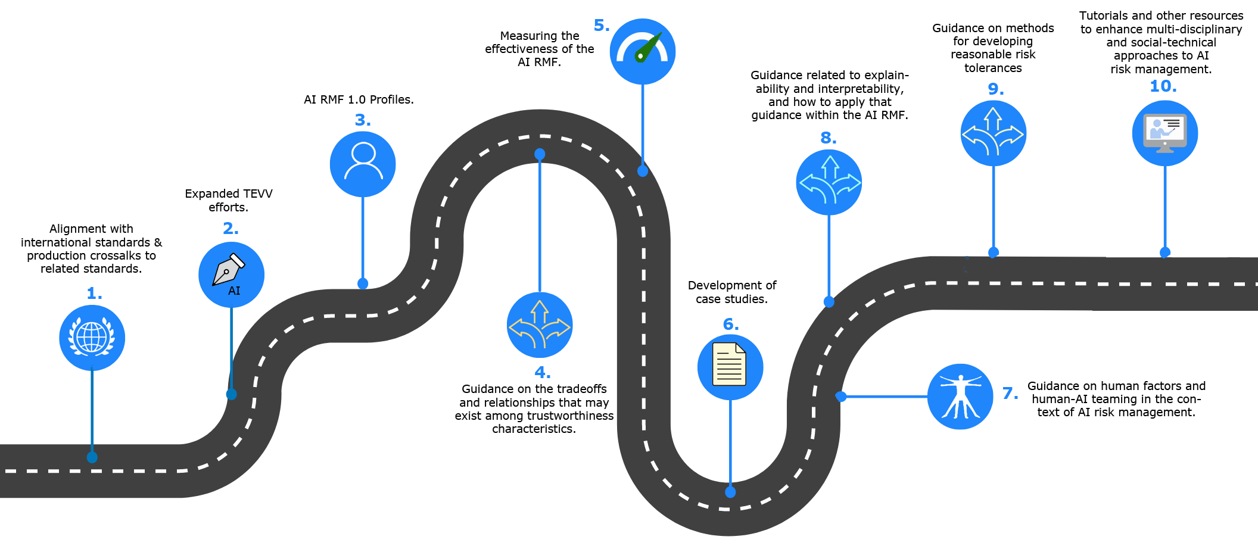

NIST’s AI RMF roadmap infographic lays out the framework’s priorities and explicitly calls out “Expanded TEVV efforts” (Test, Evaluate, Verify, Validate) and measuring effectiveness. These are the kinds of testing and vetting steps that a government review of new models before public release would likely rely on.

Source: National Institute of Standards and Technology (NIST)

Research Brief

What our analysis found

The Trump administration is reportedly considering an executive order that would require government vetting of new AI models before their public release, according to a New York Times report from early May 2026. The proposed order would establish an "AI working group" of tech executives and government officials to develop oversight procedures. Agencies under consideration to oversee the review process include the National Security Agency (NSA), the Office of the National Cyber Director, and the Office of the Director of National Intelligence. White House staff reportedly briefed leaders from Anthropic, Google, and OpenAI on the plans in the week before the report.

The immediate catalyst for this policy shift is widely reported to be Anthropic's Mythos model, which demonstrated the ability to discover thousands of critical software vulnerabilities and which the company deemed "too dangerous for public release." Anthropic has withheld Mythos from the public but launched "Project Glasswing" to let a select group of technology and cybersecurity firms use it for defensive purposes. The proposed system would reportedly grant the government early access to models without necessarily blocking their public release.

This potential move represents a dramatic reversal from the administration's earlier deregulatory stance. The discussions come amid a leadership shake-up in White House AI policy, with former AI czar David Sacks departing in March 2026 and White House Chief of Staff Susie Wiles and Treasury Secretary Scott Bessent reportedly taking more active roles. The discussions remain in early stages, with no finalized proposal or set timeline.

Fact Check

Evidence from both sides

Supporting Evidence

Multiple credible outlets corroborate the report

Publications including Tom's Hardware, Mashable, Forbes, Futurism, The Economic Times, The Hindu, and Seeking Alpha all cite the New York Times as reporting on the Trump administration's consideration of this executive order in early May 2026.

Briefings with major AI companies reportedly took place

White House staff reportedly briefed executives from Anthropic, Google, and OpenAI on the proposed plans, suggesting the discussions have moved beyond internal deliberation.

Anthropic's Mythos model provides a clear policy trigger

Sources consistently cite the model's widely reported ability to find thousands of critical software vulnerabilities, along with Anthropic's own decision to withhold it from public release as "too dangerous," as the catalyst for this regulatory reconsideration.

Proposed institutional framework is detailed and specific

Reports describe concrete structural elements including the creation of an AI working group, the involvement of specific agencies like the NSA and the Office of the National Cyber Director, and a mechanism granting government early access to models, lending credibility to the seriousness of the discussions.

Contradicting Evidence

The White House has not confirmed the executive order

A White House official responded to inquiries by stating that "Any policy announcement will come directly from the president" and characterized reporting on potential executive orders as "speculation," stopping short of either confirming or denying the plans.

This contradicts the administration's established deregulatory record

Upon taking office in January 2025, Trump revoked Biden's Executive Order 14110 on AI safety and issued his own order titled "Removing Barriers to American Leadership in Artificial Intelligence." The administration also released an AI Action Plan in July 2025 emphasizing aggressive deregulation and framing AI as a matter of national competitiveness.

Industry executives have raised concerns about stifling innovation

Some company executives have expressed worry that a pre-release government review process could slow the pace of AI development and risk eroding the competitive advantage of American AI firms on the global stage.

Discussions remain preliminary with no finalized proposal

Reports emphasize that these conversations are in early stages with no set timeline, meaning the executive order may be significantly altered, scaled back, or abandoned before any official announcement is made.
