April was a pretty strong month for LLM releases:

- Gemma 4
- GLM-5.1
- Qwen3.6
- Kimi K2.6
- DeepSeek V4

All are now added to the LLM Architecture Gallery. More details once I am fully back in May! https://t.co/HDYbWi2pcc

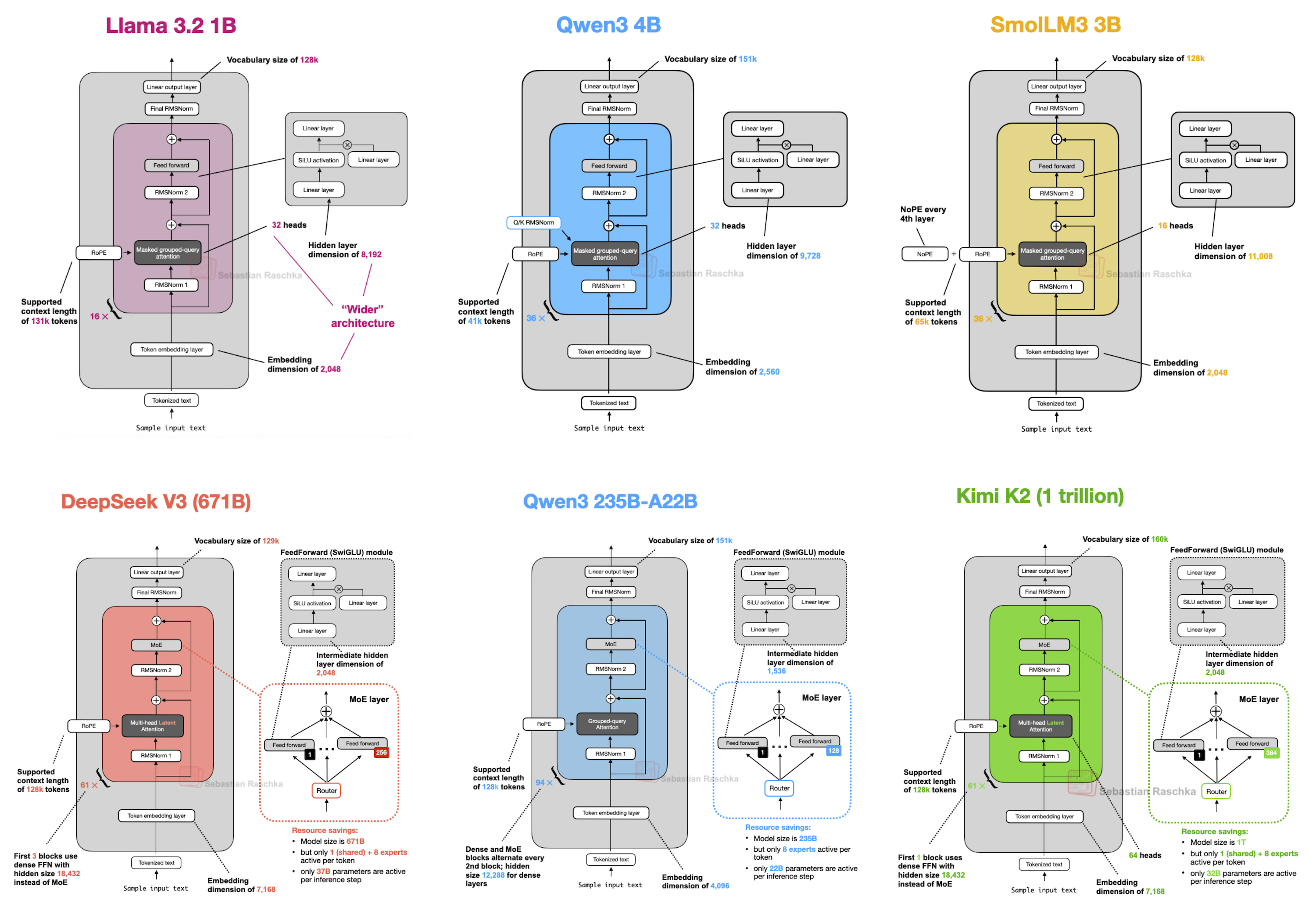

A contact-sheet style infographic of LLM architecture diagrams from the LLM Architecture Gallery (by Sebastian Raschka). It visually presents architecture cards for multiple models (e.g., Qwen3, Kimi K2, DeepSeek, Llama variants) and supports the tweet’s point that recent LLM releases have been added to the gallery by showing the gallery’s per‑model technical diagrams.

Source: Sebastian Raschka / Ahead of AI (magazine.sebastianraschka.com)

Research Brief

What our analysis found

April 2026 was one of the most consequential recent months for large language model releases, with five major models released in rapid succession by both Western and Chinese AI labs. The wave began on April 2 with Google DeepMind's Gemma 4, a family of open multimodal models available in four sizes up to 31B Dense, released under the Apache 2.0 license. Days later, Zhipu AI unveiled GLM-5.1, a 754-billion-parameter Mixture-of-Experts model under the MIT license, with programming capabilities that reportedly rival Claude Opus 4.6.

Alibaba Cloud rolled out the Qwen3.6 series throughout the month, starting with Qwen3.6-Plus via API on April 1 and followed by open-weight models on Hugging Face through April 22. Moonshot AI's Kimi K2.6 arrived around April 19-20 with 1 trillion total parameters and a 262,144-token context window. DeepSeek closed out the month on April 24 with two variants of DeepSeek V4, headlined by V4-Pro at 1.6 trillion parameters with a 1-million-token context window achieved through a hybrid attention architecture.

All five models employ Mixture-of-Experts architectures and are released under permissive open-source or open-weight licenses, reflecting a clear industry trend toward powerful, accessible AI. Sebastian Raschka's LLM Architecture Gallery, a widely referenced visual catalog of model designs, confirmed the addition of all five models, underscoring the density of innovation packed into a single month.

Fact Check

Evidence from both sides

Supporting Evidence

All five models confirmed released in April 2026

Research verifies that Gemma 4 (April 2), GLM-5.1 (April 6-7), Qwen3.6 (April 1-22), Kimi K2.6 (April 19-20), and DeepSeek V4 (April 24) all launched within the month of April 2026, fully supporting the tweet's central claim.

LLM Architecture Gallery actively updated

The gallery maintained by Sebastian Raschka was confirmed to have received updates in March and April 2026, with specific mentions of DeepSeek, Qwen, Kimi, and Gemma architectures, corroborating the claim that all five were added.

Significant scale and capability across all releases

The models represent genuinely major releases rather than minor updates, with parameter counts reaching 1.6 trillion (DeepSeek V4-Pro) and 1 trillion (Kimi K2.6), context windows up to 1 million tokens, and competitive benchmark performances such as Gemma 4's 31B model reaching the number 3 spot on the Arena AI text leaderboard for open models.

Open licensing across the board

All five models were released under permissive open-source licenses including Apache 2.0, MIT, and modified MIT, reinforcing that these were publicly significant releases accessible to the broader developer community.

Multiple independent sources confirm details

Release dates, parameter counts, and architectural choices such as Mixture-of-Experts designs were corroborated across multiple sources for each model, lending high confidence to the factual accuracy of the tweet.

Contradicting Evidence

GLM-5.1 timeline ambiguity

One source references a significant GLM-5.1 release in "spring 2025," which may indicate an earlier announcement or capability preview ahead of the confirmed April 2026 release of model weights. This leaves some ambiguity about whether the April date marks the true initial launch or a subsequent open-weight release.

Qwen3.6 benchmarks lack independent verification

Some performance benchmarks for Qwen3.6-35B-A3B are self-reported by Alibaba, and independent third-party reproductions were not available at the time of review, meaning the model's claimed capabilities should be treated with some caution.

DeepSeek V4 experienced a delayed launch

Reports from January 2026 indicated that DeepSeek V4 was anticipated for mid-February 2026, so the April 24 release represented a notable delay from initial projections. This slightly complicates the narrative that April was organically dense with releases, since part of that density resulted from scheduling slippage.

Gallery updates not yet fully documented

The LLM Architecture Gallery is confirmed to be actively maintained and updated for recent models, but the research specifically references architecture figures for predecessors such as Gemma 3 and Qwen3-Next. The tweet itself also acknowledges that "more details" would follow in May, suggesting the gallery entries may not have been complete at the time of posting.
