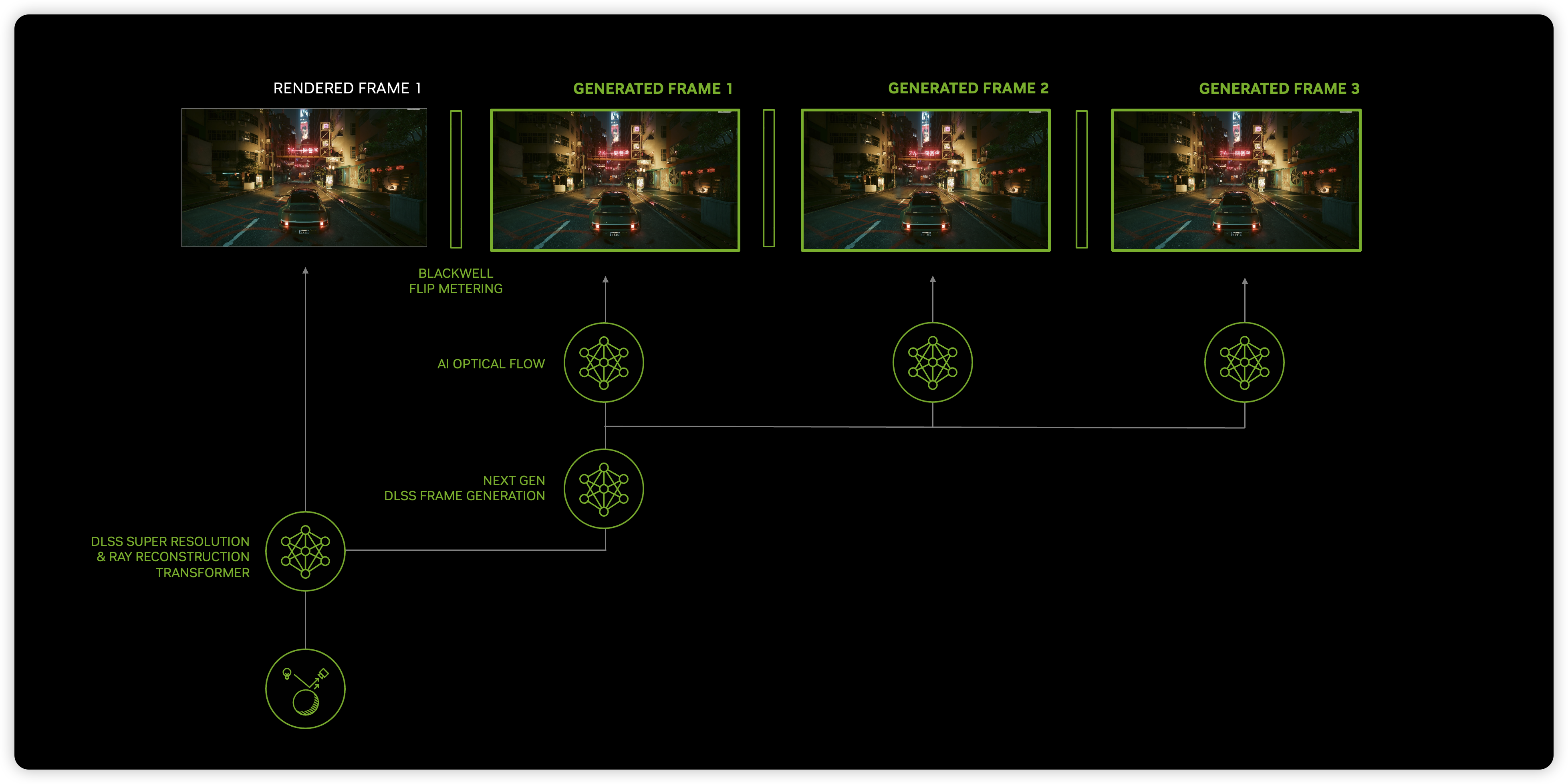

Nvidia has confirmed that the new DLSS 5 algorithm is redrawing games based on a 2D image input and motion data. https://t.co/hLLYfXG2bU https://t.co/bLsJ3osyzL

A labeled model diagram from NVIDIA Research showing a rendered frame feeding into DLSS frame-generation and AI optical-flow components to produce multiple generated frames. The graphic explicitly visualizes DLSS taking the rendered (2D) frame and motion/flow information as inputs to generate/redraw frames in real time, directly illustrating the behavior described in the tweet.

Source: NVIDIA Research (research.nvidia.com)

Research Brief

What our analysis found

Nvidia officially unveiled DLSS 5 during its GTC keynote on March 16, 2026, describing the technology as a "real-time neural rendering" model designed to add photorealistic lighting and materials to each game frame. According to coverage from Tom's Hardware and other outlets, the system operates by taking a game engine's rendered frame (source color) and motion vectors as its two primary inputs, then using an AI model to produce a visually enhanced output in real time. Nvidia has stated the feature is slated to ship in Fall 2026.

The claim that DLSS 5 effectively "redraws" games from a 2D image and motion data gained further traction after YouTuber Daniel Owen published an email exchange with Jacob Freeman, an Nvidia DLSS evangelist, who confirmed that "DLSS 5 only takes the rendered frame and motion vectors as inputs" and that "materials are inferred from the rendered frame." This revelation, reported by GamesRadar on March 20, 2026, led many in the gaming community to characterize the technology as essentially a sophisticated AI filter applied over screen-space imagery rather than a deeper integration with 3D scene data.

Early demonstrations of DLSS 5 required substantial hardware: preview builds were reportedly run on two RTX 5090 GPUs, with one card rendering the game and the other dedicated to running the neural model. Nvidia has acknowledged this setup and says the model will be optimized for single-GPU operation before its public release. Meanwhile, Nvidia and its developer partners have emphasized that studios retain "artistic control" through adjustable parameters including intensity, color grading, masking, and blending settings.

Fact Check

Evidence from both sides

Supporting Evidence

Tom's Hardware GTC coverage confirms 2D inputs

In its March 16, 2026 report on Nvidia's GTC keynote, Tom's Hardware summarized Nvidia's own statements that DLSS 5 "operates in real time, using a game engine's motion vectors and source color as inputs for its AI model," directly supporting the claim that the system works from a rendered 2D frame plus motion data.

Nvidia evangelist email confirms limited inputs

GamesRadar reported on March 20, 2026 that Nvidia DLSS evangelist Jacob Freeman told YouTuber Daniel Owen via email that "DLSS 5 only takes the rendered frame and motion vectors as inputs" and that "materials are inferred from the rendered frame," explicitly confirming the 2D-image-plus-motion-data description.

PC Gamer and Digital Foundry hands-on descriptions

Hands-on previews and reporting from PC Gamer, along with summaries of Digital Foundry's analysis, describe DLSS 5 as operating on "screen-space" frames guided by motion vectors. These descriptions reinforce that the technology processes the already-rendered 2D image rather than working directly with 3D scene geometry.

Nvidia's own "anchored" framing

Nvidia's official messaging describes the DLSS 5 output as "anchored" to the source frame via motion vectors, language consistent with the claim that the neural model operates on 2D input data rather than accessing the full 3D pipeline directly.

Contradicting Evidence

Jensen Huang's "fusing" language implies deeper 3D integration

During the GTC Q&A, Nvidia CEO Jensen Huang described DLSS 5 as "fusing controllability of the geometry and textures and everything about the game with generative AI," phrasing that suggests the system may have closer ties to 3D scene data than a simple 2D filter. This ambiguous language has fueled debate about whether the technology accesses more engine information than the confirmed two inputs.

Developer artistic controls suggest more than a passive filter

Nvidia and partner studios have publicly stated that developers retain significant "artistic control" over DLSS 5 through adjustable parameters such as intensity sliders, color grading, masking, and blending options. This level of developer-side integration complicates the characterization of DLSS 5 as merely "redrawing" from a flat image and suggests a more collaborative pipeline between the engine and the AI model.

Nvidia describes the approach as "3D-guided neural rendering"

Rather than calling DLSS 5 a 2D image filter, Nvidia's official terminology is "3D-guided neural rendering." The label implies that the motion vectors give the model's reconstruction process meaningful 3D spatial context, not just temporal coherence, which complicates the framing that it works purely from a 2D image.
