Four years ago I presented my research on multi-cluster Kubernetes orchestration at KubeCon. We built mck8s to solve distributed workload scheduling at the edge. Today, that architecture is the exact missing infrastructure layer for agentic AI. Centralized cloud endpoints are becoming a bottleneck for autonomous systems. Privacy constraints, offline requirements, and latency mean that agents must increasingly run local models directly at the edge. But orchestrating swarms of autonomous agents across distributed edge locations is a massive infrastructure problem, and it is the same distribution problem that the multi-cluster federation and decentralized scheduling patterns we designed for mck8s address. The edge is no longer just for IoT. It is the deployment target for distributed AI agents.

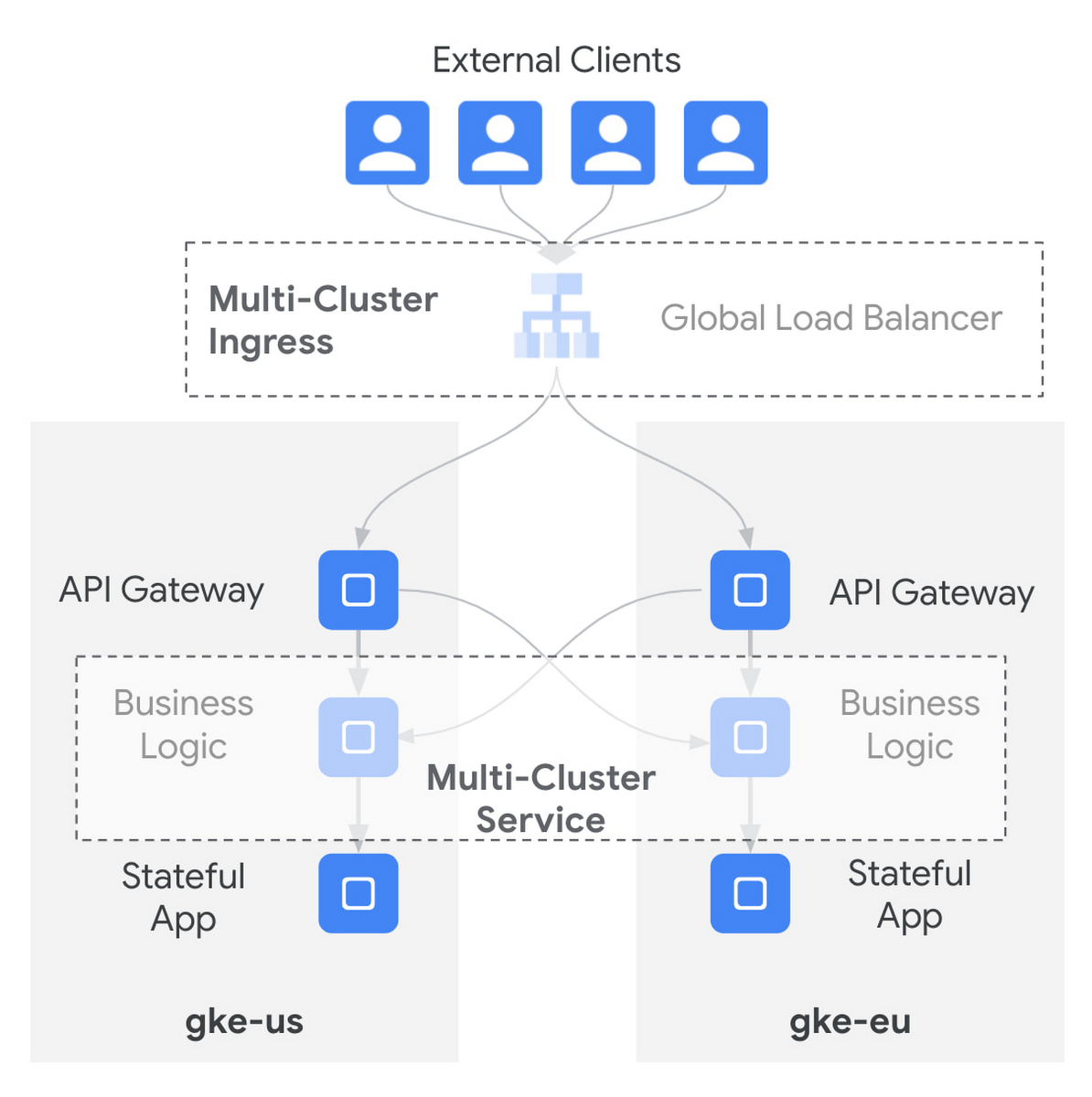

Architecture diagram of Multi-Cluster Ingress and Multi-Cluster Services. A global load balancer and API gateways route traffic to multiple GKE clusters (gke-us, gke-eu) with cross-cluster service endpoints. The diagram illustrates multi-cluster federation and edge-aware routing patterns, such as proximity-based routing and cross-cluster services. These patterns let workloads be distributed and executed locally with low latency, and the mck8s argument for agentic AI relies on the same patterns to orchestrate autonomous agents at the edge.

Source: Google Cloud Blog / Google Cloud

Research Brief

What our analysis found

A resurfaced discussion of multi-cluster Kubernetes orchestration is gaining traction as the AI industry grapples with a fundamental infrastructure question: where and how to run autonomous AI agents at scale. The original mck8s platform was presented at the 2021 International Conference on Computer Communications and Networks (ICCCN). Its multi-cluster scheduling cut the share of pending pods to 6%, compared with 65% for standard Kubernetes Federation under identical workloads. Proponents now argue that this distributed architecture maps directly onto the needs of agentic AI systems that must operate across geographically dispersed edge locations.

The timing of this argument is significant. Industry forecasts project that 75% of enterprise data will be created and processed at the edge by 2025, while centralized cloud providers face what analysts describe as a mounting "compute bottleneck" driven by insatiable AI demand. AI agents are particularly resource-intensive, generating up to 25 times more network traffic than a standard chatbot, which transforms network loads from predictable bursts into relentless, high-intensity streams. These dynamics are forcing a rethink of where AI workloads should live, with latency-sensitive applications like autonomous vehicles and industrial safety systems demanding sub-millisecond response times that centralized clouds often cannot guarantee.

However, the leap from research prototype to production-ready infrastructure for agentic AI is far from trivial. Agentic AI projects face a projected 40% cancellation rate by the end of 2027, frequently due to underestimated operational complexity and cost. Meanwhile, mck8s itself was described as "not stable" and "not production ready" as recently as 2023, and it cannot run on managed Kubernetes offerings like GKE due to its need for direct control plane access. The vision of orchestrating swarms of autonomous agents at the edge is compelling, but the gap between architectural alignment and deployable reality remains substantial.

Fact Check

Evidence from both sides

Supporting Evidence

Centralized cloud is hitting real bottlenecks for AI workloads

Centralized cloud providers are struggling to meet the processing demands of AI, facing sky-high costs from specialized GPU hardware and significant data transfer fees. Real-time AI applications such as autonomous vehicles require immediate responses that are hampered by the physical distance to centralized data centers, and analysts have called the current centralized model "unsuitable for mass AI adoption."
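
Physical distance sets a latency floor that no amount of cloud capacity can remove. The minimal Python sketch below estimates the propagation-only round-trip time to a site at a given distance. It assumes that light in optical fiber covers roughly 200 km per millisecond and ignores queuing, routing, and inference time. The distances used are hypothetical.

```python
# Rough lower bound on network round-trip time imposed by physical distance.
# Assumption: signals travel through optical fiber at roughly 200,000 km/s
# (about two-thirds of the speed of light in vacuum). Queuing, routing, and
# processing delays are ignored, so real round trips will be slower.
FIBER_SPEED_KM_PER_MS = 200.0  # 200,000 km/s == 200 km per millisecond


def min_round_trip_ms(distance_km: float) -> float:
    """Return the propagation-only round-trip time for a one-way distance."""
    if distance_km < 0:
        raise ValueError("distance must be non-negative")
    return 2 * distance_km / FIBER_SPEED_KM_PER_MS


# Hypothetical distances: an on-site edge node vs. regional and remote data centers.
for label, km in [("on-site edge", 0.5), ("regional DC", 300), ("remote DC", 1500)]:
    print(f"{label:>12}: >= {min_round_trip_ms(km):.3f} ms")
```

Under these assumptions, a data center 300 km away costs at least 3 ms per round trip before any model runs. Only sites within about 100 km could even theoretically respond in under a millisecond.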

Edge computing is validated as a critical AI deployment target

Forecasts indicate that 75% of enterprise data will be created and processed at the edge by 2025. Edge computing directly addresses privacy, latency, reliability, and bandwidth concerns that are paramount for autonomous agent operation.

Kubernetes is already recognized as foundational for edge AI

Kubernetes is widely described as "quickly becoming the operating system for Edge AI" because of its modularity, self-healing capabilities, and flexibility. Multi-cluster Kubernetes architectures in particular improve availability, scalability, workload isolation, and location-based infrastructure segmentation, all of which are essential for distributed edge deployments.

mck8s demonstrated measurable improvements in distributed scheduling

In geo-distributed testbed evaluations, mck8s reduced pending pods to 6% compared to 65% for Kubernetes Federation, validating its core claim of superior resource utilization and elasticity across multiple clusters.
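
The toy Python sketch below makes the pending-pod metric concrete. It is not mck8s's actual scheduler. It compares two placements: in one, each pod may run only on its home cluster; in the other, pods that do not fit spill over to the cluster with the most free CPU (a simple worst-fit fallback). Cluster names, capacities, and pod requests are hypothetical. The resulting ratios are illustrative only and do not reproduce the 6% vs. 65% results.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Cluster:
    name: str
    free_cpu: int  # free CPU in millicores (hypothetical values)


def schedule(pods: List[Tuple[str, int]], clusters: List[Cluster], allow_spillover: bool) -> float:
    """Place pods in order and return the fraction left pending.

    pods: (home_cluster_name, cpu_request_millicores) pairs.
    allow_spillover=False: a pod may only run on its home cluster.
    allow_spillover=True: if the home cluster lacks room, try the cluster
    with the most free CPU instead (worst-fit fallback).
    """
    # Copy clusters so the caller's capacity values are not mutated.
    by_name = {c.name: Cluster(c.name, c.free_cpu) for c in clusters}
    pending = 0
    for home, cpu in pods:
        target = by_name[home]
        if target.free_cpu < cpu and allow_spillover:
            target = max(by_name.values(), key=lambda c: c.free_cpu)
        if target.free_cpu >= cpu:
            target.free_cpu -= cpu
        else:
            pending += 1
    return pending / len(pods) if pods else 0.0


clusters = [Cluster("edge-a", 2000), Cluster("edge-b", 2000), Cluster("edge-c", 4000)]
pods = [("edge-a", 500)] * 6 + [("edge-b", 500)] * 2
print(schedule(pods, clusters, allow_spillover=False))
print(schedule(pods, clusters, allow_spillover=True))
```

In this toy run, home-only placement leaves two of the eight pods pending (0.25). With spillover, those two pods move to `edge-c` and none remain pending (0.0). Letting a multi-cluster scheduler use capacity across sites is the intuition behind the pending-pod comparison.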

Agentic AI demands distributed, low-latency infrastructure by design

Agentic AI relies on dense, low-latency interconnects across distributed sites rather than massive centralized GPU clusters. AI agents generate up to 25 times more network traffic than chatbots, and multi-agent systems require decentralized or hierarchical orchestration to prevent single points of failure and ensure scalability.
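
One way to keep a single dispatcher from becoming a point of failure is to let each agent rank edge sites itself and fail over locally. The minimal Python sketch below assumes that each agent already has a measured round-trip time for every site. It also assumes a `submit` callback that reports whether a site accepted a task. Both, along with the site names, are hypothetical stand-ins rather than part of mck8s or any specific framework.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Site:
    name: str
    rtt_ms: float        # latency measured by this agent (hypothetical probe)
    healthy: bool = True


def candidate_sites(sites: List[Site]) -> List[Site]:
    """Return healthy sites, nearest (lowest measured RTT) first."""
    return sorted((s for s in sites if s.healthy), key=lambda s: s.rtt_ms)


def dispatch(task: str, sites: List[Site], submit: Callable[[Site, str], bool]) -> Optional[str]:
    """Try sites in proximity order and fall back to the next one on failure.

    Each agent runs this locally with its own view of the sites, so there is
    no central dispatcher whose outage would stall every agent.
    """
    for site in candidate_sites(sites):
        if submit(site, task):
            return site.name
        site.healthy = False  # record the failure locally, then try the next-nearest site
    return None  # no site accepted the task


sites = [Site("edge-lyon", 2.0), Site("edge-paris", 6.5), Site("cloud-frankfurt", 14.0)]
down = {"edge-lyon"}  # simulate an outage at the nearest site
print(dispatch("summarize-sensor-log", sites, lambda s, t: s.name not in down))
```

Here the nearest site is down, so the task lands on `edge-paris`, and the agent records the outage in its own view without consulting a coordinator. A real deployment would also need health checks that restore recovered sites and some shared capacity policy, both of which this sketch omits.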

Multi-agent orchestration is an acknowledged infrastructure challenge

Experts recognize that single-agent architectures quickly become bottlenecks for complex real-world problems, and that coordinating communication, shared state, and workflow execution across distributed autonomous agents is a critical and unsolved infrastructure problem.

Contradicting Evidence

mck8s is not production-ready

As of approximately 2023, the mck8s GitHub repository explicitly stated the project was "in active development and not stable, and thus not production ready." Describing it as the "exact missing infrastructure layer" overstates the maturity of a research prototype that has not been validated in production environments.

Managed Kubernetes services are excluded

mck8s cannot run on managed Kubernetes offerings such as Google Kubernetes Engine (GKE) because its components require direct access to the Kubernetes control plane. This is a significant practical limitation given that most enterprises rely on managed services, narrowing the platform's real-world applicability.

Agentic AI faces severe operational challenges beyond orchestration

Agentic AI projects face a projected 40% cancellation rate by the end of 2027 due to underestimated operational complexity and cost. Infrastructure orchestration is only one dimension of this challenge. Data fragmentation, framework instability, and unpredictable demand patterns are further hurdles that multi-cluster Kubernetes alone does not solve.

The "exact" framing overstates architectural alignment

While mck8s was designed for distributed workload scheduling, it was built for general containerized applications, not specifically for the unique requirements of AI agent coordination such as shared cognitive state, inter-agent communication protocols, and dynamic tool access. Significant adaptation would be needed to handle agentic AI workflows.

Competing infrastructure approaches exist

The edge AI and distributed AI orchestration space is rapidly evolving with multiple competing frameworks and platforms. Presenting mck8s as the singular missing layer ignores alternative solutions from major cloud providers and open-source communities that are purpose-built for AI workloads at the edge.

Edge deployment of large AI models remains technically constrained

Running capable language models and autonomous agents on edge hardware is still limited by compute, memory, and power constraints. The orchestration layer is necessary but not sufficient. The hardware limitations of edge devices remain a barrier to deploying sophisticated AI agents outside centralized or hybrid cloud environments.
